Hello! I'm

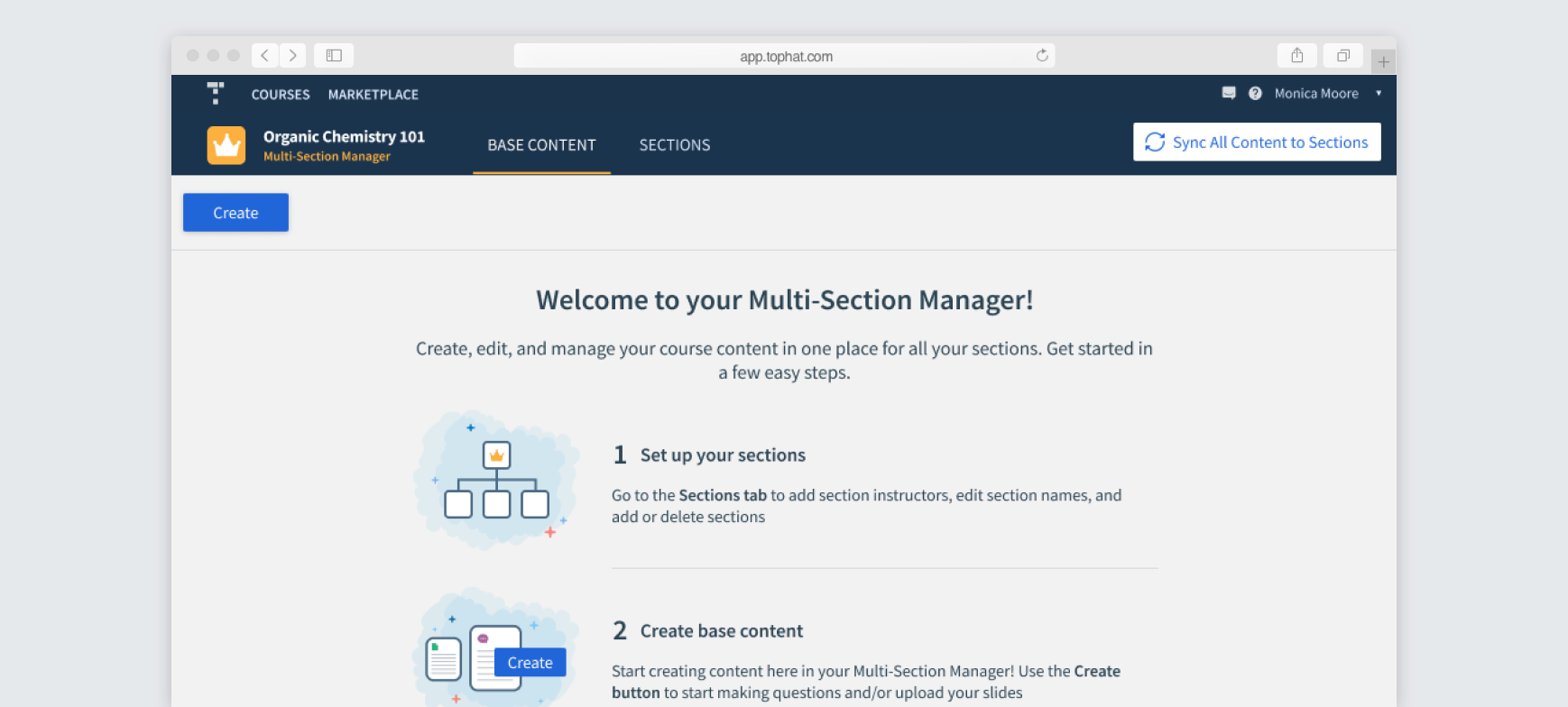

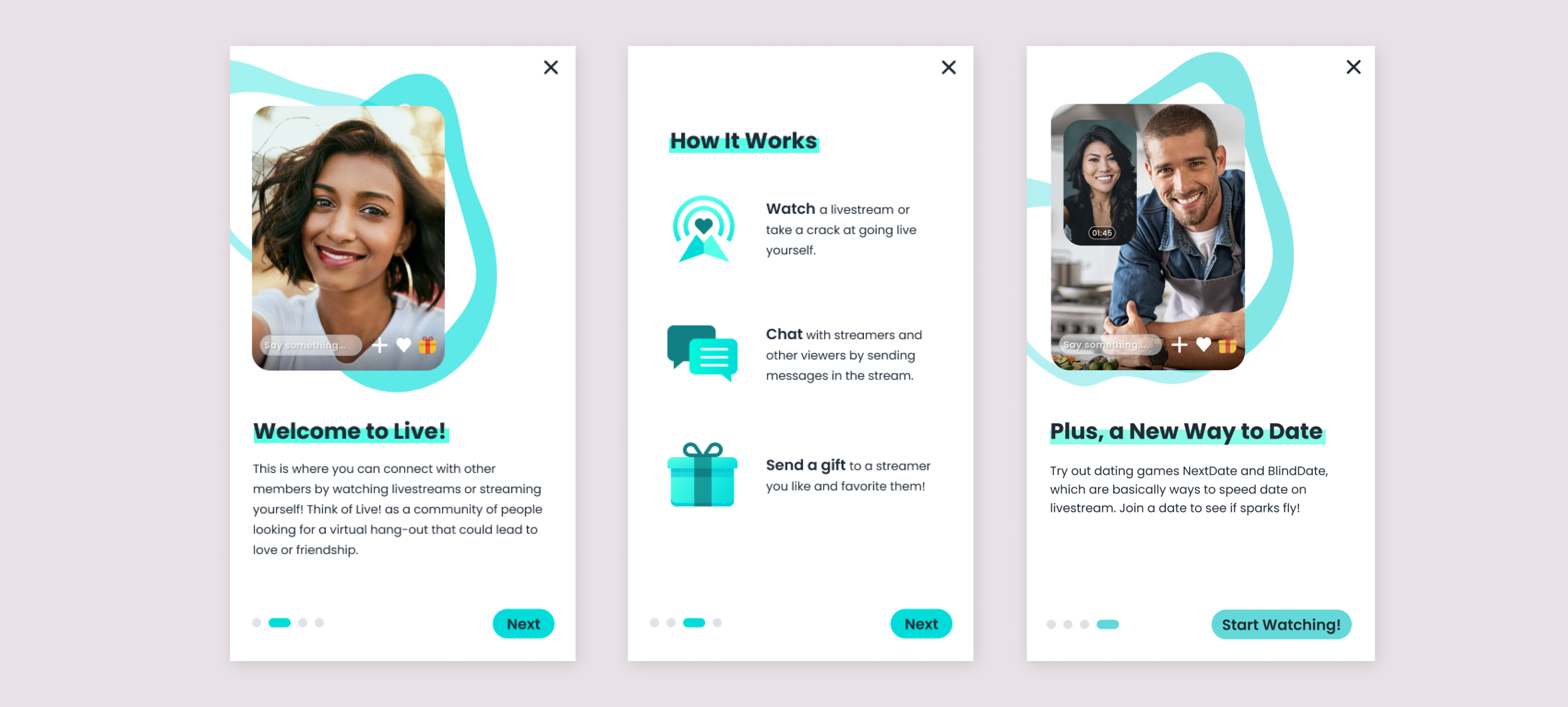

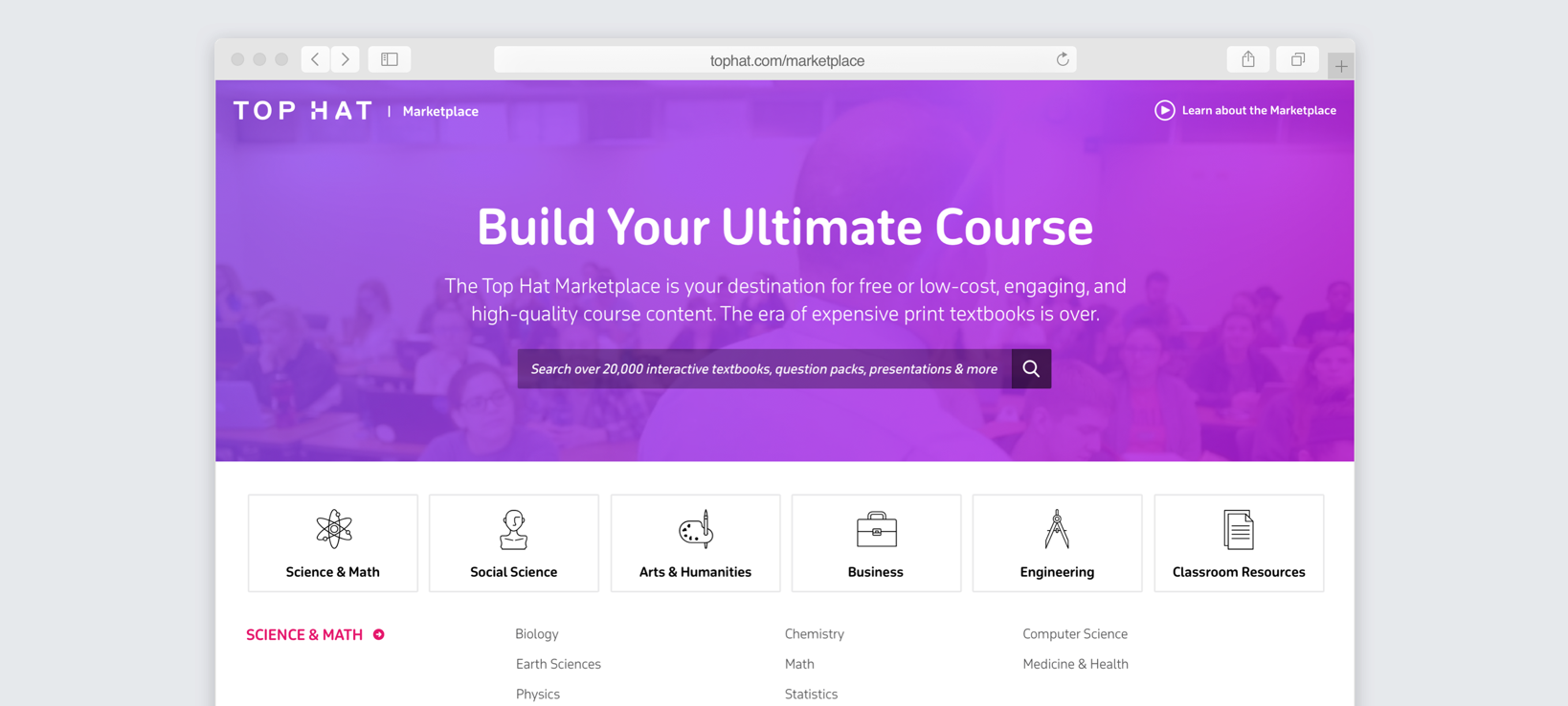

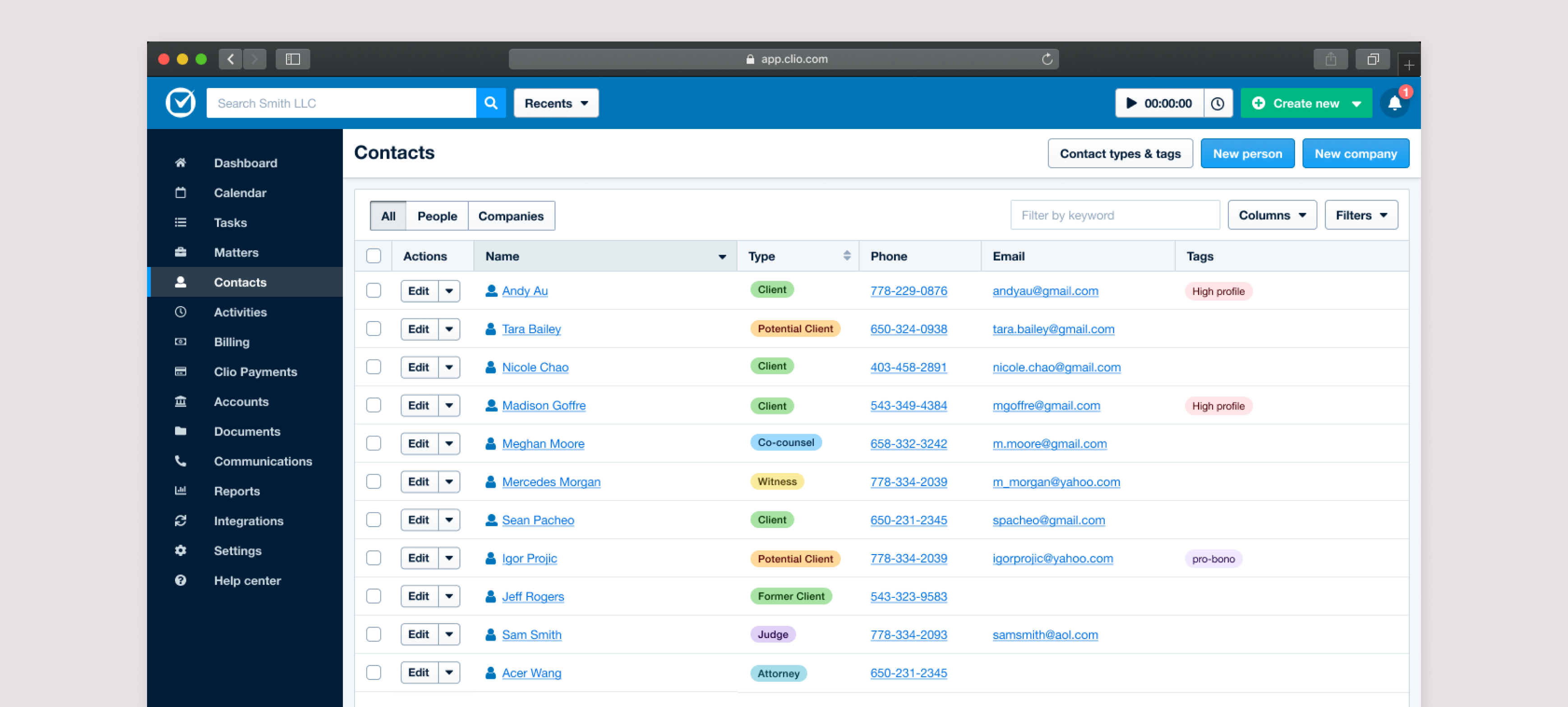

Monica Moore

I’m a product designer with 8 years of experience, based in Vancouver, Canada (and I’m willing to relocate!). What I love most about being a designer is solving complex, interesting problems in ways that have a positive impact on users. I have a strong bias for action and thrive in fast-paced, high-growth environments where I can help ship great products!

Scroll onwards to read about some of the exciting projects I've worked on!